publications

Publications by category in reverse chronological order. Generated by jekyll-scholar.

2026

- Preprint

Examining Risks in the AI Companion Application Ecosystem
Natalie Grace Brigham, Lucy Qin, and Tadayoshi Kohno
Preprint, Mar 2026

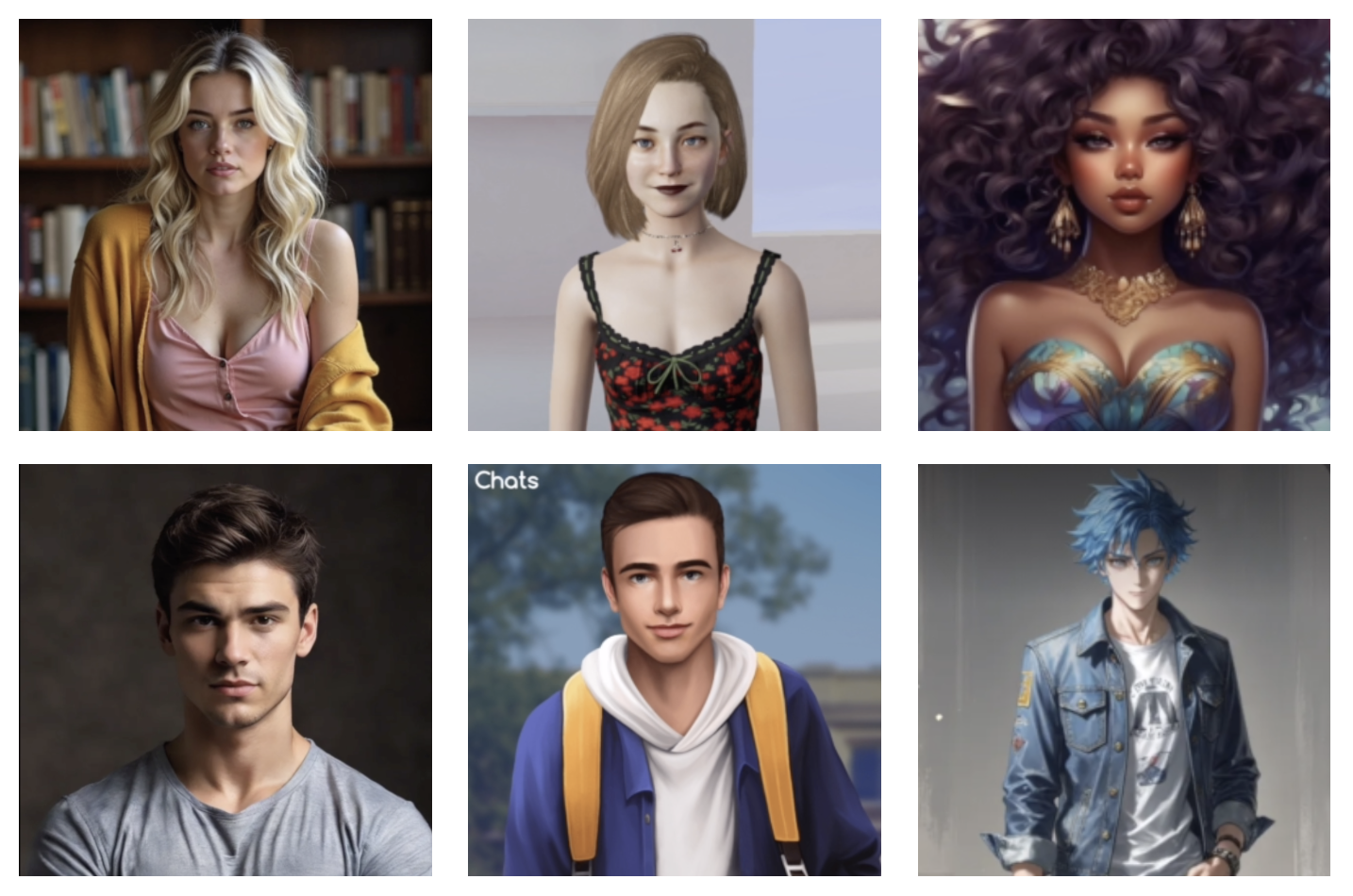

While computer systems that allow users to interact through conversational natural language (i.e., chatbots) have existed for many years, varying types of applications advertising AI companionship (e.g., Character AI, Replika) have proliferated in recent years due to advancements in large language models. Our work offers a threat model encompassing two distinct risk categories: harms posed to users by AI companion applications, and harms enabled by malicious users exploiting application features. To further understand this application ecosystem, we identified 489 unique apps from the App Store and Play Store that advertised AI companionship. We then systematically conducted and analyzed walkthroughs of a stratified sample of 30 apps with respect to our threat model. Through our analysis, we categorize broader ecosystem trends that provide context for understanding threats. We also identify specific threats related to sensitive data collection and sharing, anthropomorphism, engagement mechanisms, sexual interactions and media, and the ingestion and reconstruction of likeness, including the potential for generating synthetic nonconsensual intimate imagery. This study provides a foundational security perspective on the AI companion application ecosystem and informs future research, policy, and technical development within and beyond this field. Content warning: This paper includes descriptions of applications that can be used to create synthetic nonconsensual representations, including explicit imagery, as well as discussion of self-harm and suicidal ideation.

2025

- AIES

Biased AI Outputs Can Impact Humans’ Implicit Bias: A Case Study of the Impact of Gender-Biased Text-to-Image Generators
Mattea Sim, Natalie Grace Brigham, Tadayoshi Kohno, and 2 more authors
AAAI/ACM Conference on Artificial Intelligence, Ethics, and Society (AIES), Madrid, Oct 2025

A wave of recent work demonstrates that text-to-image generators (i.e., t2i) can perpetuate and amplify stereotypes about social groups. This research asks: what are the implications of biased t2i for humans who interact with these systems? Across three human-subjects studies, 1,881 participants engaged in a simulated t2i interaction in which the output was controlled to appear either stereotypic, gender-balanced, or counter-stereotypic, via the ratio of perceived women and men in the output of occupation prompts (e.g., a physicist). We then measured people’s implicit gender bias using a gender-brilliance implicit association task (IAT), a bias that both relates to stereotypic occupation output in t2i and that has implications for women’s representation in different fields. Participants who interacted with neutral t2i output (including only gender-neutral objects, e.g., DVDs) showed relatively high implicit gender-brilliance bias at baseline. Stereotypic t2i output did not increase implicit gender bias relative to this baseline (Study 1). However, participants exposed to counter-stereotypic t2i output had significantly lower implicit gender bias than participants exposed to only gender-neutral output (Studies 1 and 2). Although counter-stereotypic t2i may reduce implicit gender bias amongst users, less than 5% of participants actually preferred the counter-stereotypic representations of women and men. Instead, most participants preferred representations that accurately reflect gender distributions in society or that are more gender-balanced (Study 3).
This work demonstrates a novel approach to studying human-AI interaction and reveals important insights for designing generative AI that seeks to mitigate harm. In particular, these findings have implications for understanding the impact of stereotypic t2i on human users, bias mitigation strategies via counter-stereotypic t2i output, and how these impacts (mis)align with people’s preferences for t2i representations.

- USENIX

Analyzing the AI Nudification Application Ecosystem
Cassidy Gibson, Daniel Olszewski, Natalie Grace Brigham, and 5 more authors
Proceedings of the 34th USENIX Security Symposium, Seattle, Aug 2025

Internet Defense Prize Runner Up, CSAW 2025 Social Impact Winner

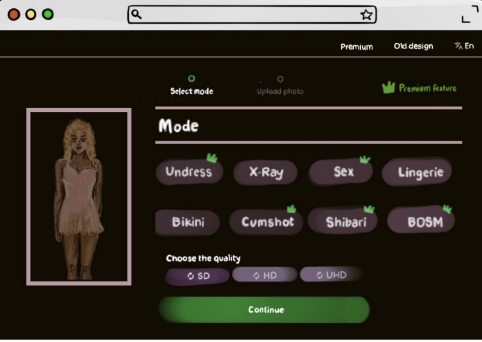

Given a source image of a clothed person (an image subject), AI-based nudification applications can produce nude (undressed) images of that person. Moreover, not only do such applications exist, but there is ample evidence of the use of such applications in the real world and without the consent of an image subject. Still, despite the growing awareness of the existence of such applications and their potential to violate the rights of image subjects and cause downstream harms, there has been no systematic study of the nudification application ecosystem across multiple applications. We conduct such a study here, focusing on 20 popular and easy-to-find nudification websites. We study the positioning of these web applications (e.g., finding that most sites explicitly target the nudification of women, not all people), the features that they advertise (e.g., ranging from undressing-in-place to the rendering of image subjects in sexual positions, as well as differing user-privacy options), and their underlying monetization infrastructure (e.g., credit cards and cryptocurrencies). We believe this work will empower future, data-informed conversations—within the scientific, technical, and policy communities—on how to better protect individuals’ rights and minimize harm in the face of modern (and future) AI-based nudification applications.

2024

- SoLaR @ NeurIPS

Developing Story: Case Studies of Generative AI’s Use in Journalism
Natalie Grace Brigham, Chongjiu Gao, Tadayoshi Kohno, and 2 more authors
Workshop on Socially Responsible Language Modelling Research, Vancouver, Dec 2024

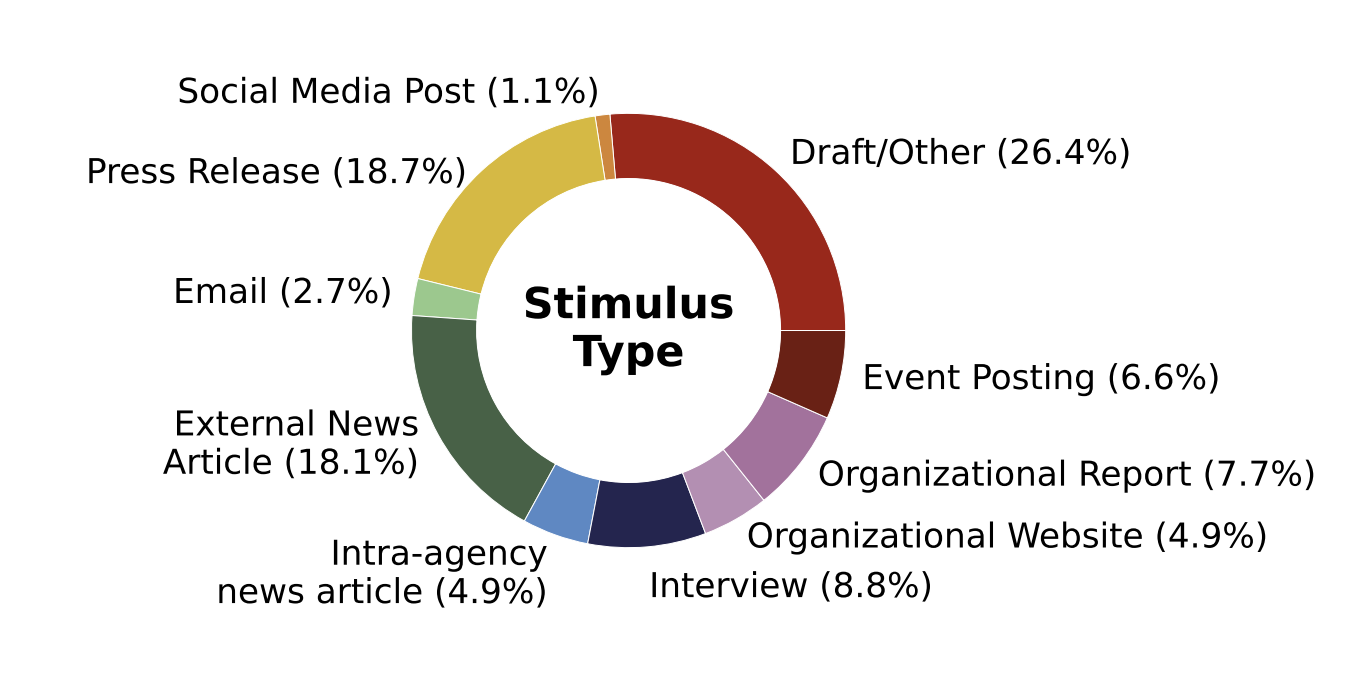

Journalists are among the many users of large language models (LLMs). To better understand journalist-AI interactions, we study LLM usage by two news agencies: we browse the WildChat dataset, identify candidate interactions, and verify them by matching them to articles published online. Our analysis uncovers instances where journalists provide sensitive material, such as confidential correspondence with sources or articles from other agencies, to the LLM as stimuli and prompt it to generate articles. They then publish these machine-generated articles with limited intervention (median output-publication ROUGE-L of 0.62). Based on our findings, we call for further research into what constitutes responsible use of AI and for the establishment of clear guidelines and best practices on using LLMs in a journalistic context.

- SOUPS

“Violation of my body:” Perceptions of AI-generated non-consensual (intimate) imagery
Natalie Grace Brigham, Miranda Wei, Tadayoshi Kohno, and 1 more author
Proceedings of the Twentieth Symposium on Usable Privacy and Security, Philadelphia, Aug 2024

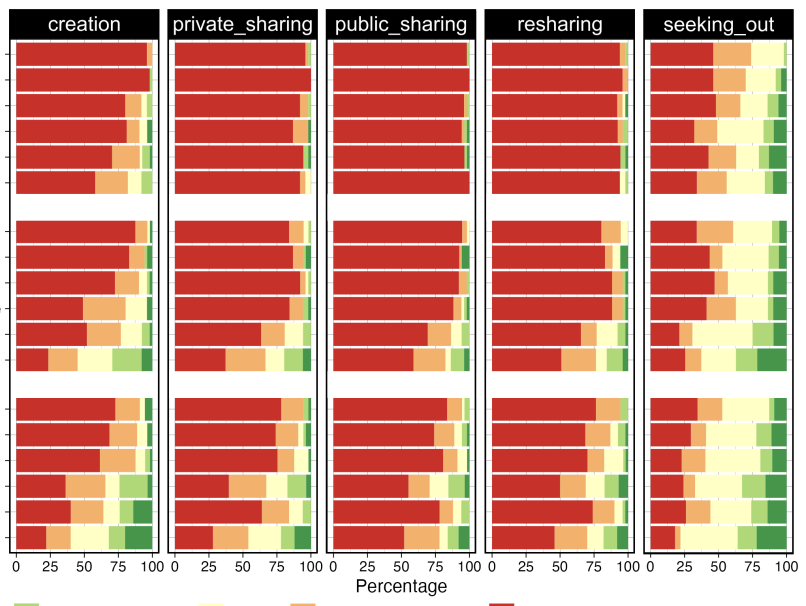

AI technology has enabled the creation of deepfakes: hyper-realistic synthetic media. We surveyed 315 individuals in the U.S. on their views regarding the hypothetical non-consensual creation of deepfakes depicting them, including deepfakes portraying sexual acts. Respondents indicated strong opposition to creating and, even more so, sharing non-consensually created synthetic content, especially if that content depicts a sexual act. However, seeking out such content appeared more acceptable to some respondents. Attitudes around acceptability varied further based on the hypothetical creator’s relationship to the participant, the respondent’s gender, and their attitudes toward sexual consent. This study provides initial insight into public perspectives of a growing threat and highlights the need for further research to inform social norms as well as ongoing policy conversations and technical developments in generative AI.